Guoqi Li (李国齐): Spiking Neural Networks: From Small Networks to Large Models

Research background

Transformer (Google); OpenAI: GPT.

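Scaling law: is endlessly scaling up models the correct approach to achieving AGI?

Beyond the scaling law, how can we find a sustainable way to drive current AI systems to a new stage, i.e., AGI?

Power issue for today's AI systems: power consumption grows exponentially as performance improves.

Human brain vs. current large models: the human brain, with far more parameters than large models, consumes only about 20 W (vs. about 300 kW for a large model). How can we borrow the brain's mechanisms?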

Overreliance on the Transformer architecture:

- Advantages: 1. exceptional performance; 2. high parallelism.

- Disadvantages: 1. compute grows quadratically with sequence length; 2. time and space complexity grow linearly (during inference); 3. challenges in handling ultra-long sequences.

How can neuroscience contribute to the foundational theories of next-generation AI? AI is developing significantly faster than neuroscience.

Brain-inspired large model architecture

- At present, large models have poor biological plausibility and fail to exploit the rich multi-scale dynamic features of brain networks, such as somatic dynamics, dendritic dynamics, and multi-scale memory functions.

- Large models do not yet reflect the characteristics of 0/1 spike communication, event-driven mechanisms, dynamic computing, and sparse addition, which are important foundations for …

- Large models do not fully exploit neuron diversity and neural-coding diversity, resulting in insufficient generalization ability (e.g., continual learning, multi-task learning, zero-shot learning).

Neurons themselves have diverse structures; how can this diversity be reflected in large models?

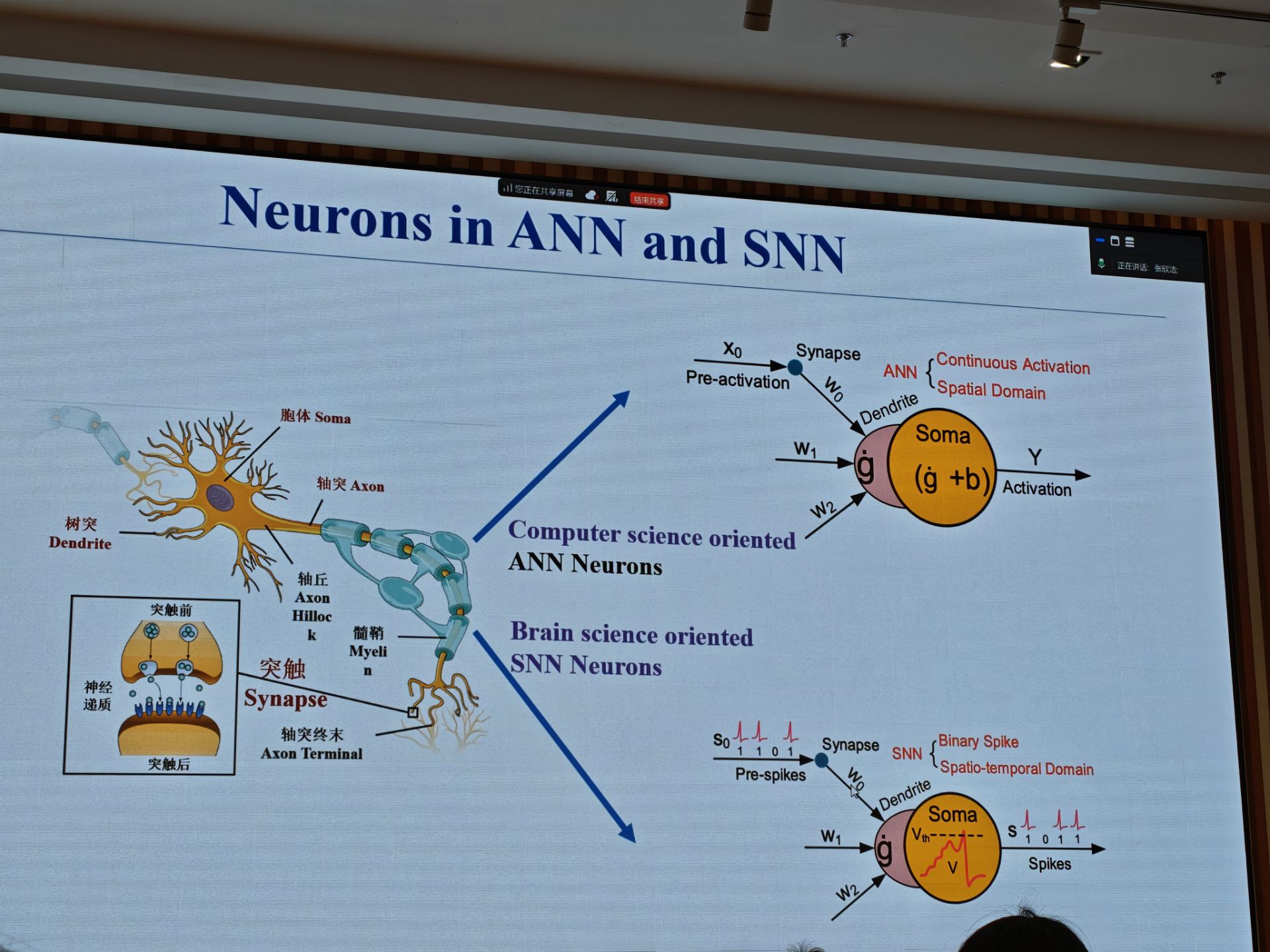

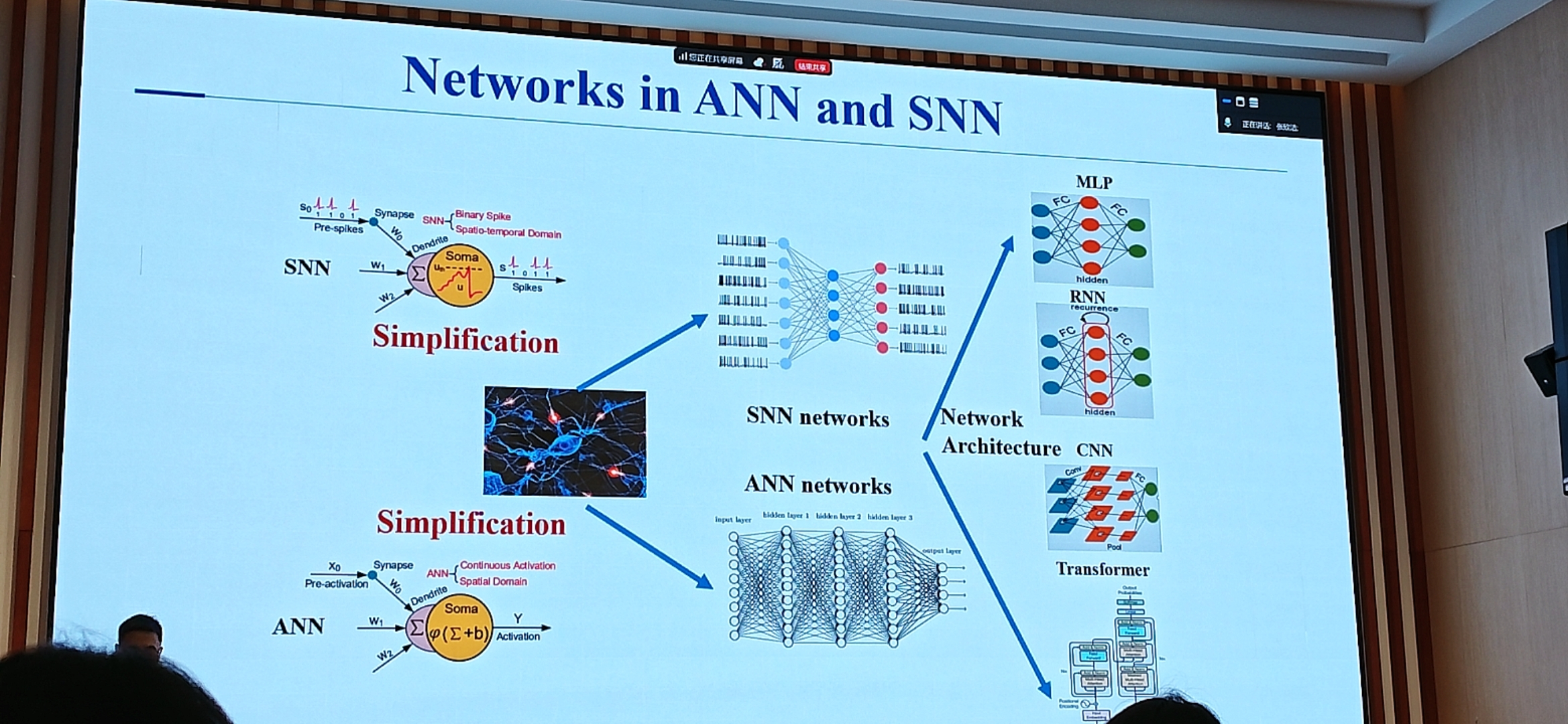

- SNN (spiking neural network): the mainstream network in brain-inspired intelligence.

- ANN (artificial neural network): the mainstream network in deep learning.

SNN = ANN + neuronal dynamics

Problems:

- How to propose a suitable neuronal model?

- How to build a network to solve real-world AI tasks?

LIF (leaky integrate-and-fire) neuron: simple and easy to use, yet with rich biological dynamics.
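A minimal discrete-time LIF sketch makes the "simple yet dynamic" point concrete: the membrane potential leaks, integrates input, fires on crossing a threshold, and resets. The hard reset, the exponential leak, and all parameter values (`tau=10`, `v_th=1.0`, a constant input of 0.15) are illustrative assumptions, not values from the talk.

```python
import numpy as np

def simulate_lif(input_current, tau=10.0, v_th=1.0, v_reset=0.0, dt=1.0):
    """Discrete-time leaky integrate-and-fire neuron with hard reset.

    tau: membrane time constant (ms); v_th: firing threshold;
    v_reset: potential after a spike; dt: time step (ms).
    All parameter values are illustrative assumptions.
    """
    decay = np.exp(-dt / tau)
    v = v_reset
    voltages, spikes = [], []
    for i_t in input_current:
        v = decay * v + i_t          # leak, then integrate the input
        s = 1 if v >= v_th else 0    # fire when the threshold is crossed
        if s:
            v = v_reset              # hard reset after a spike
        voltages.append(v)
        spikes.append(s)
    return np.array(voltages), np.array(spikes)

v, s = simulate_lif(np.full(100, 0.15))
print(s.sum(), np.flatnonzero(s))
```

With this constant drive the potential charges toward 0.15 / (1 - e^-0.1) ≈ 1.58, crosses the threshold, resets, and repeats, giving regular firing; a weaker input whose steady state stays below `v_th` (e.g. 0.09) would produce no spikes at all.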

At large scale: the difficulty of synchronous computation.

How to build spiking neural networks?

SNNs have been limited in scale and performance due to the lack of large-scale learning algorithms.

Back-propagation: deep SNNs are difficult to optimize.

Key Problems

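- In the era of large models: are there ways to improve performance other than simply stacking more parameters?

- Starting from large models: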

dendritic computation

Dendritic spiking neural networks: 1. intrinsic complexity...

Current large models: based on external complexity (scaling-law driven).

New approach: internal complexity (intrinsic-complexity driven).

- Parallel dendritic spiking neuron model: dendritic computation, neural-dynamics computation, parallel neuron computation.

- Event-driven linear self-attention spiking networks for large models (is linear growth perhaps the best that can be achieved?)
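To see why linear attention changes the cost from quadratic to linear in sequence length N, the NumPy sketch below computes the same kernelized attention two ways: once by building the N × N score matrix (O(N²d)), and once by re-associating the product as φ(Q)(φ(K)ᵀV) (O(Nd²)). It is a generic, non-causal linear attention using the feature map φ(x) = elu(x) + 1 from Katharopoulos et al. (2020), swapped in for illustration; it is not the event-driven spiking attention or MetaLA discussed in the talk.

```python
import numpy as np

def feature_map(x):
    # Assumed positive feature map phi(x) = elu(x) + 1 (Katharopoulos et al., 2020).
    return np.where(x > 0, x + 1.0, np.exp(x))

def linear_attention_quadratic(q, k, v):
    # Builds the full N x N similarity matrix: O(N^2 d) time, O(N^2) memory.
    fq, fk = feature_map(q), feature_map(k)
    scores = fq @ fk.T                           # (N, N)
    return (scores @ v) / scores.sum(axis=1, keepdims=True)

def linear_attention_linear(q, k, v):
    # Re-associates the product as phi(Q) (phi(K)^T V): O(N d^2), no N x N matrix.
    fq, fk = feature_map(q), feature_map(k)
    kv = fk.T @ v                                # (d, d_v), independent of N
    normalizer = fq @ fk.sum(axis=0)             # (N,)
    return (fq @ kv) / normalizer[:, None]

rng = np.random.default_rng(0)
n, d = 512, 16
q, k, v = (rng.standard_normal((n, d)) for _ in range(3))
out_quad = linear_attention_quadratic(q, k, v)
out_lin = linear_attention_linear(q, k, v)
print(np.allclose(out_quad, out_lin))
```

Both functions return the same result; only the order of the matrix products differs, which is what removes the quadratic term.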

How to accelerate the training of large SNN models? On GPUs, the operators that SNNs rely on (decay...) are implemented inefficiently.

model & algorithm -> training platform -> software tool chain ...

Research Progress

significant performance improvement for snns.

2019: SNNs could not be trained on ImageNet.

2025: SNNs are comparable to state-of-the-art ANNs, with energy efficiency improved by 5-30×.

Main challenges in SNNs:

- Complex spatiotemporal dynamics.

- Binary spike representations: the spike signal is non-differentiable (quantization error; surrogate gradient error).

- Training acceleration.

The reset mechanism.

Triton framework (adapted for GPUs).

How to train large-scale SNNs effectively and efficiently?

STBP method: spatio-temporal backpropagation. It addresses the non-differentiability problem by estimating gradients so that they can propagate through the network.

gradient approximation.
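A minimal NumPy sketch of the surrogate-gradient idea behind STBP: the forward pass keeps the non-differentiable Heaviside spike, while the backward pass substitutes a rectangular pseudo-derivative of width `A` around the threshold (one of several surrogate shapes in the literature). The threshold, window width, and the one-weight toy loss are assumptions chosen for illustration, and the sketch omits the temporal (membrane-potential) path that STBP also backpropagates through.

```python
import numpy as np

V_TH = 1.0   # firing threshold (assumed value)
A = 1.0      # width of the rectangular surrogate window (assumed value)

def spike_forward(u):
    # Heaviside step: the true derivative is 0 everywhere except at u == V_TH,
    # so exact backpropagation through spikes gives no useful gradient.
    return np.where(u >= V_TH, 1.0, 0.0)

def spike_surrogate_grad(u):
    # Rectangular surrogate: dS/du is replaced by 1/A inside a window of
    # width A centred on the threshold, and 0 elsewhere.
    return np.where(np.abs(u - V_TH) < A / 2, 1.0 / A, 0.0)

# One-step toy example: u = w * x, loss = (S(u) - target)^2.
x, w, target = 2.0, 0.45, 1.0
u = w * x                                 # membrane potential 0.9, just below threshold
s = spike_forward(u)
dloss_ds = 2.0 * (s - target)             # the neuron should have fired
dloss_dw = dloss_ds * spike_surrogate_grad(u) * x
print(s, dloss_dw)
```

The neuron stays silent (u = 0.9 < 1), but the surrogate window still covers u, so the gradient is nonzero and negative, telling gradient descent to increase w; with the exact derivative the gradient would be 0 and learning would stall.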

TDBN (threshold-dependent batch normalization) method:

How to prove that the TDBN approach prevents vanishing/exploding gradients in deep SNNs?
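As we understand the TDBN paper (Zheng et al., AAAI 2021), pre-activations are normalized per channel over both the batch and time dimensions and then scaled by α·V_th, tying their spread to the firing threshold. The sketch below follows that description under simplifying assumptions: NumPy, input shape (T, B, C), and optional affine parameters; the function name `tdbn` and its arguments are hypothetical, not a library API.

```python
import numpy as np

def tdbn(x, v_th=1.0, alpha=1.0, weight=None, bias=None, eps=1e-5):
    """Threshold-dependent batch normalization (sketch).

    x has shape (T, B, C): time steps, batch, channels. Statistics are taken per
    channel over the time and batch axes, and the normalized input is scaled by
    alpha * v_th so that its spread matches the firing threshold.
    weight/bias play the role of the learnable affine parameters (lambda, beta).
    """
    mean = x.mean(axis=(0, 1), keepdims=True)
    var = x.var(axis=(0, 1), keepdims=True)
    x_hat = alpha * v_th * (x - mean) / np.sqrt(var + eps)
    if weight is not None:
        x_hat = weight * x_hat
    if bias is not None:
        x_hat = x_hat + bias
    return x_hat

rng = np.random.default_rng(1)
x = 5.0 + 3.0 * rng.standard_normal((4, 32, 8))   # (T, B, C) pre-activations
y = tdbn(x, v_th=0.5)
print(y.mean(axis=(0, 1)).round(6), y.std(axis=(0, 1)).round(3))
```

Each channel comes out with mean 0 and standard deviation ≈ α·V_th (here 0.5), so pre-activations neither drown the threshold nor vanish below it as depth grows.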

Directly trained SNNs: MS-ResNet (membrane-shortcut ResNet).

Attention SNNs: multi-dimensional attention.

Spike-driven Transformer: a new computing operation (involves only masking and addition).

- V2: versatility; handles image classification, object detection, and semantic segmentation concurrently.

- V3: binary spike firing is a mechanistic defect; integer training; SpikeYOLO for object detection.
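Why binary spikes turn matrix multiplication into masking plus addition can be checked directly: when the input vector is 0/1, W @ s is just the sum of the columns of W whose input neuron spiked, so no multiplications are needed. The sketch below is a generic illustration with random, hypothetical weights; it is not the Spike-driven Transformer's actual attention operator.

```python
import numpy as np

def spike_matmul_by_addition(weights, spikes):
    # With binary spikes, W @ s only needs additions: sum the columns of W
    # whose input neuron fired (the spike vector acts as a mask).
    out = np.zeros(weights.shape[0])
    for j in np.flatnonzero(spikes):
        out += weights[:, j]
    return out

rng = np.random.default_rng(2)
W = rng.standard_normal((6, 10))
s = (rng.random(10) < 0.3).astype(float)    # sparse binary spike vector
print(np.allclose(W @ s, spike_matmul_by_addition(W, s)))
```

The sparser the spikes, the fewer additions are performed, which is where the energy savings of event-driven hardware come from.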

SVL: spike-based vision-language pretraining framework

Open-source training platform for SNNs: SpikingJelly.

Asynchronous sensing-computing neuromorphic chip.

Spike-based dynamic computing with an asynchronous sensing-computing neuromorphic chip.

Static power: 0.41 mW; typical power: 1-5 mW.

network model with internal complexity bridges artificial intelligence and neuroscience.

SpikeGPT: a language model based on SNNs.

SNN-based large models: SpikeLM (ternary SNN).

SNN-based large models: SpikeLLM, which solves the problem of outliers in the neuronal state by varying the time steps of spiking neurons.

Unified linear model framework: MetaLA

Unifies linear Transformers, SSMs, and linear RNNs.

Spike-based neuromorphic large models on FPGA.

Brain-inspired edge devices (traditional vision tasks) <-> brain-inspired cloud (NLP/generative tasks).

Michael Hausser (hausser@hku.hk)

Neural codes:

- Spatial codes: identity; spatial patterns; number (sparse or dense).

- Activity codes.

The cortex exhibits high degrees of variability: is this noise or information (signal)?

We can't record all neurons in the brain simultaneously.

-> Strategy: introduce a defined, small amount of noise: 1 extra action potential (AP) in one neuron.

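- Single-cell input-output curve: probability of an extra spike.

$$ p \cdot K = 0.019 \times 1500 \approx 28 \text{ extra spikes per spike } (28 \pm 13) $$

where p is the probability of an extra spike per target neuron and K is the number of target neurons. If this cascade spreads over 5 synaptic steps:

$$ 28^{5} \approx 1.7 \times 10^{7} $$

-> A single neuron can have a huge impact.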
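The back-of-envelope amplification can be reproduced in a few lines, including the spread implied by the ± 13 range. Reading the exponent 5 as five successive synaptic steps, and ignoring saturation and overlap between target populations, are assumptions of this sketch.

```python
p = 0.019     # probability of an extra spike per target neuron (value from the talk)
K = 1500      # number of target neurons (value from the talk)
print(f"p * K = {p * K:.1f} extra spikes per extra spike")

mean_gain, sd_gain = 28, 13   # reported as 28 +/- 13
steps = 5                     # assumed: exponent 5 read as five successive synaptic steps
gains = {g: g ** steps for g in (mean_gain - sd_gain, mean_gain, mean_gain + sd_gain)}
for g, total in gains.items():
    print(f"gain {g}: {total:,} extra spikes after {steps} steps")
```

Even the low end of the range (gain 15) gives roughly 7.6 × 10^5 extra spikes, so the conclusion that single-neuron perturbations grow fast does not hinge on the exact gain.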

Patch-clamp and silicon-probe recordings in vivo:

- Single-neuron perturbations grow, and grow fast.

- The cortex is highly sensitive to noise.

- The neural code must be robust to perturbations.

Using light to probe neurons. Advantages: non-invasive; inert; precise; multiplexable; targetable.

Two revolutions using light to probe neural circuits:

- Record (Ohki, Nature 2005)

- Manipulate (Boyden, Nature Neuroscience 2005)

The challenge: combine recording and manipulation.

Limitations of conventional optogenetics: unknown number and spatial distribution of activated cells; cells are targeted by genetic rather than functional identity; synchrony across the network.

Replaying the neural code with light (Hausser & Smith 2007): calcium sensor + channelrhodopsin.

all-optical toolkit

two-photon optogenetics of dendritic spines and neural circuits.

Spatial light modulator (SLM): a programmable beam splitter for digital holography.

Microscope design.

Optical insertion of single spikes in single neurons.

Conceptual goals: read & write.

The state of the art: subcellular connectivity probing across the brain.

Single units and sensation: a neuron doctrine for perceptual psychology? (H. B. Barlow, 1972)

how many neurons are sufficient to generate a sensory percept? 1 neuron & <100 neurons.

Psychometric curve relating neural activity to behaviour: P(lick) vs. number of activated target neurons.

all-optical interrogation of layer 2/3 pyramidal cells in mouse visual cortex.

estimating brain state from pupillometry and neuronal synchrony.

Tuning curve (orientation)

the impact of photo stimulation depends on brain state and on task difficulty

causal evidence for a role of hippocampal place cells in spatial navigation. (place cells in hippocampal CA1)

Strategy for causal manipulation of place cells in hippocampal CA1 (reward zone).

Lick rate vs. virtual position: the stimulation makes cells fire prematurely, at the wrong location (misleading).

A subpopulation of decision neurons in a primary sensory area: activating decision neurons can influence behavior.

The effect of photostimulation on behaviour depends on behavioral state and task difficulty.

real-time boosting or disruption of activity.

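Ji-Song Guan (管吉松): Cortical Memory Networks and Intelligence

Understanding the biological nature of memory.

Memory arises from the modification of connections between neurons: "fire together, wire together" (Hebb's rule).

Hopfield: synaptic plasticity in neural networks can reproduce memories.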
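A classic binary Hopfield network makes the Hebb-to-memory link concrete: Hebbian outer-product learning stores patterns as attractors, and asynchronous updates pull a corrupted cue back to the stored memory. Network size, number of patterns, and corruption level are illustrative assumptions.

```python
import numpy as np

def train_hebbian(patterns):
    """Hebbian outer-product rule: w_ij grows when units i and j are co-active."""
    n_units = patterns.shape[1]
    w = patterns.T @ patterns / n_units
    np.fill_diagonal(w, 0.0)            # no self-connections
    return w

def recall(w, cue, sweeps=5, rng=None):
    """Asynchronous updates: with symmetric w and zero diagonal, each single-unit
    update never increases the energy E = -1/2 s^T w s, so the state settles
    into an attractor."""
    rng = np.random.default_rng() if rng is None else rng
    s = cue.copy()
    for _ in range(sweeps):
        for i in rng.permutation(len(s)):
            s[i] = 1 if w[i] @ s >= 0 else -1
    return s

rng = np.random.default_rng(3)
patterns = rng.choice([-1, 1], size=(3, 100))        # 3 random memories over 100 units
w = train_hebbian(patterns)

cue = patterns[0].copy()
cue[rng.choice(100, size=15, replace=False)] *= -1   # corrupt 15% of the first memory
restored = recall(w, cue, rng=rng)
print((cue == patterns[0]).mean(), (restored == patterns[0]).mean())
```

At this low memory load (3 patterns in 100 units) the cue is pulled back to the stored pattern; storing many more patterns would eventually create spurious attractors and degrade recall.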

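Eric Kandel: synaptic modification (LTP) occurs during memory formation.

Structure of memory: memories are stored in a distributed way. Lesioning parts of the brain shows that a memory does not reside in any single region.

Key brain regions for memory: hippocampus, amygdala. Place cells and grid cells were discovered in the hippocampus and entorhinal cortex.

Working memory: prefrontal cortex. Dopamine neurons encode learning.

Can memories be enhanced or erased?

- In what form is a specific memory encoded?

- How are memories retrieved?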

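The brain's experience of speech: automatic segmentation of speech, enhanced information perception.

Responses of memory encoding in the brain while learning the ODNMS (olfactory delayed non-match-to-sample) task:

Odor 1 -> delay -> Odor 2 -> reward/no reward

Same odor: no reward; different odors: reward.

Schema memory: memory encoding forms well-defined trajectories, with spatiotemporal combinations corresponding to time.

Within the specific sampling space of a memory-encoding trajectory, perceptual/memory information from other units is received and separated.

Memory encoding appears in cortical layers 2/3.

Context-specific cellular response.

Memory engrams in the cortex (VIS, RSP, SS, MO): functional validation that engram cells reside in cortical layer 2/3.

Engram cells are regulated by epigenetic modifications.

- Long-term memory learning: AMPK phosphorylation

- Alternative splicing of synaptic proteins ...

How engrams store information:

Light-stimulation intervals of 250 ms vs. 15 s.

Ca2+ recordings show that engram cells form after the event.

Memory encoding caused by the event occurs before the behavioral change.

Cortical engram encoding is formed through long-range influence of the hippocampus: inhibiting/activating the hippocampal DG prevents/produces memory encoding in the visual cortex.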

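Cortical engram networks

LFP: local field potential (activity of a cell ensemble).

Inhibitory neurons & LFP.

Hippocampus-mediated memory storage depends on cortical gamma synchrony. During learning, gamma synchrony between cortical areas requires the participation of the hippocampus.

Synchronized neural oscillations may rewrite encoding trajectories via local inhibitory neurons.

The synchrony mechanism fully compensates for the hippocampus's role in memory storage and encoding: after hippocampal lesion, if a synchronized oscillatory signal is induced during training, the hippocampus-lesioned mice can still complete the memory task.

Stimulation or synchrony? With a synchronized signal, learning is fast; with a random signal, it is slow.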

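Memory research paradigms:

- Conditioned reflexes

- Context/spatial-cue memory

Key questions:

- Decoding the specific content stored in a memory;

- Discovering the on/off switch of memory encoding, to enhance the ability to learn difficult information.

The information-coding nature of cortical engrams: overall connectivity (across and within brain areas) decreases, but connectivity between brain areas increases.

IEG recordings across multiple brain areas.

Enhanced between II and III.

Inter-areal connectivity and cortical engrams.

Blocking inter-areal connectivity suppresses memory formation. The existence of a memory constrains the degrees of freedom of encoding through cross-area coordination.

The amount of information cooperatively encoded by cortical units.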

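Understanding learning signals.

- ANN learning relies on backpropagation.

- Learning in cortical networks relies on prediction errors: changes in the strength of inter-areal gamma synchrony are equivalent to the prediction-error signal for learning.

Consistency validation in the human brain.

Blocking and reshaping the learning signal: blocking LEC-mediated inter-areal gamma synchrony impairs memory formation.

Cortical synchrony determines the ability to form memories of complex tasks: inhibiting LEC layer 5 neurons prevents learning of complex tasks; conversely, enhancing the activity of LEC layer 5 neurons speeds up learning of complex tasks.

Local network features and E-I ensembles (inhibitory neurons / synchrony regulation and engram populations).

Memorizing complex sounds (sequences of two or three sound segments) is nearly impossible; enhancing synchrony between brain areas can improve this memory ability.

Engrams: integrated information is enriched in engram-centered circuits. Learning something new immediately triggers a new engram; the engram network gradually weakens, leaving only a trace.

Continuous attractor networks and attractor networks; energy landscape.

Epileptic activity in the RSC (retrosplenial cortex) causes out-of-body experiences.

Memory-based recognition:

- Memory can be artificially accelerated

- and can be quantified through brain measurements.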

Tatyana O. Sharpee: Information theory of neural circuits and behavior

Information-theory framework:

- Statistics of natural inputs, interpreted using information theory.

- Allocation of resources between different sensory systems, neuronal types, and ion channels within cells.

- Implications for decision-making strategies in different regimes, based on sensing and computing costs.

Examples

Natural stimuli are correlated in space and time (white noise vs. natural scenes). A possible explanation for the shape of the power spectrum S(k) and of log S(k):

Natural stimuli are scale-invariant. D. L. Ruderman and W. Bialek, Phys. Rev. Lett. (1994)

By shaping the spectrum of white noise, the noise statistics can be made close to those of natural scenes. However, for natural scenes (visual, auditory, olfactory), two-point statistics are not enough.
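A 1D toy version of "shaping white noise": scale each Fourier amplitude of white noise by k^(-β/2) so the power spectrum falls as S(k) ∝ k^(-β), then recover β from a straight-line fit of log S(k) against log k. β = 2 is assumed because natural images are often described as having roughly 1/k² power spectra; the signal length, trial count, and function names are illustrative. Consistent with the notes, this matches only second-order (two-point) statistics.

```python
import numpy as np

def shaped_noise(n, exponent, rng):
    # Start from white Gaussian noise (flat spectrum) and scale each Fourier
    # amplitude by k^(-exponent/2), so the power spectrum becomes k^(-exponent).
    spectrum = np.fft.rfft(rng.standard_normal(n))
    k = np.fft.rfftfreq(n)
    scale = np.zeros_like(k)
    scale[1:] = k[1:] ** (-exponent / 2.0)    # drop the k = 0 (mean) term
    return np.fft.irfft(spectrum * scale, n=n)

def power_spectrum_slope(signals):
    # Average the periodogram over trials, then fit log S(k) = c + slope * log k.
    n = signals.shape[1]
    k = np.fft.rfftfreq(n)[1:]
    power = (np.abs(np.fft.rfft(signals, axis=1)) ** 2).mean(axis=0)[1:]
    slope, _ = np.polyfit(np.log(k), np.log(power), 1)
    return slope

rng = np.random.default_rng(4)
white = rng.standard_normal((200, 1024))
scale_free = np.stack([shaped_noise(1024, 2.0, rng) for _ in range(200)])
slope_white = power_spectrum_slope(white)
slope_scale_free = power_spectrum_slope(scale_free)
print(round(slope_white, 2), round(slope_scale_free, 2))
```

White noise gives a slope near 0 and the shaped noise a slope near -2; a power law is what scale invariance implies, since rescaling k only shifts log S(k) by a constant.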

Natural stimuli are non-Gaussian.

Natural stimuli often can be approximated as mixtures of Gaussians with different variance.
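A quick way to see that a mixture of Gaussians with different variances is itself non-Gaussian is its excess kurtosis (0 for a Gaussian). The sketch draws each sample's standard deviation at random from two assumed values, 0.5 and 2.0, with equal weights; for such a scale mixture the theoretical excess kurtosis is 3·E[σ⁴]/E[σ²]² - 3 ≈ 2.34.

```python
import numpy as np

def excess_kurtosis(x):
    # 0 for a Gaussian; positive values mean heavier tails and a sharper peak.
    z = (x - x.mean()) / x.std()
    return (z ** 4).mean() - 3.0

rng = np.random.default_rng(5)
n = 200_000
gaussian = rng.standard_normal(n)

# Two-component Gaussian mixture with equal weights and different standard
# deviations (illustrative values): each sample picks its scale at random.
sigma = rng.choice([0.5, 2.0], size=n)
mixture = sigma * rng.standard_normal(n)

print(round(excess_kurtosis(gaussian), 2), round(excess_kurtosis(mixture), 2))
```

Every component is Gaussian, yet the mixture is heavy-tailed: quiet stretches with small variance alternate with rare high-variance events, which is the signature attributed to natural stimuli above.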

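Is this related to the Fourier transform?

Asymmetries in the structure of natural visual stimuli between ON and OFF channels. (What do ON and OFF channels refer to?)

ON channel (ON pathway): firing increases when a location in the visual field changes from dark to bright (luminance increment).

OFF channel (OFF pathway): firing increases when a location in the visual field changes from bright to dark (luminance decrement).

In natural environments, dark backgrounds with bright objects are more common, and shadows produce steeper OFF edges.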

Hierarchical data -> hyperbolic geometry

$$ \mathrm{d}s^{2} = \frac{\mathrm{d}x^{2} + \mathrm{d}y^{2}}{y^{2}} $$
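The metric above is the upper half-plane model of hyperbolic space, whose geodesic distance has the closed form d = arccosh(1 + ((x₂ - x₁)² + (y₂ - y₁)²) / (2·y₁·y₂)). The sketch below illustrates why it suits hierarchical data: as points approach the boundary y → 0, the same horizontal offset costs ever more distance, leaving exponentially more room, much like the leaves of a tree. The function name and test points are illustrative.

```python
import numpy as np

def halfplane_distance(p, q):
    """Geodesic distance for ds^2 = (dx^2 + dy^2) / y^2 on the upper half-plane (y > 0)."""
    (x1, y1), (x2, y2) = p, q
    return np.arccosh(1.0 + ((x2 - x1) ** 2 + (y2 - y1) ** 2) / (2.0 * y1 * y2))

# Vertical geodesics: the distance is |ln(y2 / y1)|, so (0, 1) to (0, e) has length 1.
print(halfplane_distance((0.0, 1.0), (0.0, np.e)))

# The metric is invariant under scaling (x, y) -> (c x, c y).
print(halfplane_distance((0.0, 1.0), (3.0, 1.0)), halfplane_distance((0.0, 2.0), (6.0, 2.0)))

# A fixed horizontal offset costs more the closer both points are to y = 0,
# growing roughly like 2 * ln(1 / y).
dists = [halfplane_distance((0.0, y), (1.0, y)) for y in (1.0, 0.1, 0.01)]
print(dists)
```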

Why is olfaction difficult?

Representing 3D hyperbolic space with the Poincaré ball.

entropy, trees

Ning-long Xu (徐宁龙): Neural circuit computations for generalization and rule switching in decision-making

a fundamental form of computation: information integration.

[sensory input, internal models] -> perception & judgements -> motor actions

reach an intuitive understanding before constructing a precise quantitative model.

computational theory: what is the goal of computation, why is it appropriate, and what is the logic of the strategy by which it can be carried out?

Representation & algorithm: how can this computational theory be implemented? What is the representation for the input and output, and what is the algorithm for the transformation?

hardware implementation: how can the representation and algorithm be realized physically?

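Perceptual inference: once you have seen a clear version of an image, you can immediately recognize a blurred version of it, whereas before that you only appear confused (integration of information).

Prior knowledge & sensory input -> perceptual inference [Example: black-and-white checkerboard]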

law of effect:

the concept of mental models

Latent learning: a form of learning that is not immediately expressed in an overt response. It occurs without any obvious reinforcement of the behavior or associations that are learned. A mechanism related to observational learning.

Evidence from animal psychology: meta-learning (learning to learn).

Bottom-up construction of sensory perception: the feedforward model.

Decision-making was largely simplified as a feedforward process.

evidence accumulation in decision-making. Rats and Humans Can Optimally Accumulate Evidence for Decision-Making.
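Evidence accumulation is often modeled as a drift-diffusion process: noisy evidence is summed until it hits one of two bounds. The vectorized sketch below is a generic drift-diffusion model, not the specific accumulator model of the cited rat/human study; drift values, bound, noise level, and time step are assumptions. For symmetric bounds ±a and a start at 0, the probability of hitting the correct bound is 1 / (1 + exp(-2·v·a/σ²)), which the simulation should approximate up to a small time-discretization error.

```python
import numpy as np

def simulate_ddm(drift, n_trials, noise=1.0, bound=1.0, dt=0.005, max_steps=4000, rng=None):
    """Drift-diffusion model: accumulate noisy evidence x until it hits +bound or -bound.

    Returns choices (+1 correct bound, -1 error bound, 0 undecided) and decision times (s).
    """
    rng = np.random.default_rng() if rng is None else rng
    x = np.zeros(n_trials)
    choice = np.zeros(n_trials, dtype=int)
    rt = np.full(n_trials, np.nan)
    for step in range(1, max_steps + 1):
        active = choice == 0
        if not active.any():
            break
        # Euler-Maruyama step: deterministic drift plus Gaussian noise scaled by sqrt(dt).
        x[active] += drift * dt + noise * np.sqrt(dt) * rng.standard_normal(active.sum())
        up = active & (x >= bound)
        down = active & (x <= -bound)
        choice[up], choice[down] = 1, -1
        rt[up | down] = step * dt
    return choice, rt

rng = np.random.default_rng(6)
results = {}
for drift in (0.5, 1.0, 2.0):
    choice, rt = simulate_ddm(drift, 2000, rng=rng)
    results[drift] = ((choice == 1).mean(), np.nanmean(rt))
    predicted = 1 / (1 + np.exp(-2 * drift))     # analytical accuracy for bound = noise = 1
    print(drift, round(results[drift][0], 3), round(predicted, 3), round(results[drift][1], 3))
```

Stronger evidence (larger drift) yields both higher accuracy and faster decisions; when sensory noise dominates, as in the finding below, lowering the drift-to-noise ratio degrades both.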

task uncertainty was dominated by sensory noise but not cognitive uncertainty.

PPC activity carries out complex and diverse functions.

PPC damage: lacking knowledge or lacking sensation?

PPC activity in monkeys correlates with evidence accumulation.

Mice learn the concepts of high (32 kHz) and low (8 kHz) tones and make decisions by generalization.

Test tones (30% of all trials).

generalization is essential for perceptual decision making (abstraction)

sensory input & learned ->

decision making requires generalization and depends on PPC. feedback.

Negative results for the causal role of PPC.

Flexible decision-making: rule switching. Wisconsin Card Sorting Test.

- Rule inference: Use feedback to infer the current hidden rule(color, number, shape).

- Generalization: Apply the inferred rule to new stimuli until the rule changes.

Internal model based updating

A rule-inference flexible decision making task.

A belief-state RL model to capture rule-inference
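A belief-state model for rule inference can be sketched as a Bayesian filter over the hidden rule: feedback updates the posterior, and a hazard rate allows for unannounced rule switches. Everything here is a simplified, hypothetical toy: three rules, deterministic feedback, a greedy agent that sorts by its most probable rule, and assumed `hazard` and `noise` values. Real Wisconsin cards can match on several dimensions at once, which this ignores, and the sketch is not the model presented in the talk.

```python
import numpy as np

RULES = ("color", "number", "shape")

def update_belief(belief, chosen_rule, rewarded, hazard=0.05, noise=0.1):
    """One step of Bayesian belief updating over the hidden sorting rule.

    hazard: assumed per-trial probability that the rule switches.
    noise: assumed probability that feedback is misleading.
    """
    # Likelihood of the observed feedback under each candidate rule.
    matches = np.arange(len(belief)) == chosen_rule
    p_reward = np.where(matches, 1 - noise, noise)
    likelihood = p_reward if rewarded else 1 - p_reward
    posterior = belief * likelihood
    posterior /= posterior.sum()
    # Allow for a possible switch to one of the other rules before the next trial.
    n = len(belief)
    return (1 - hazard) * posterior + hazard * (1 - posterior) / (n - 1)

def run_task(n_trials=60, switch_at=30):
    belief = np.full(len(RULES), 1 / len(RULES))
    choices = []
    for t in range(n_trials):
        true_rule = 0 if t < switch_at else 2     # "color", then an uncued switch to "shape"
        choice = int(np.argmax(belief))           # act on the most probable rule
        rewarded = choice == true_rule            # deterministic feedback (assumption)
        belief = update_belief(belief, choice, rewarded)
        choices.append(RULES[choice])
    return choices

choices = run_task()
print(choices[28:36])
```

After the switch, two unrewarded trials are enough to collapse the belief in "color", and the agent then tests the remaining rules until feedback confirms "shape".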

OFC feedback circuit implements feedback-based rule inference

Both generalization and inference require information integration. Sensory input & Rule/Category -> Choice

Dendritic computation as a powerful mechanism for information integration.

Previously: Dendritic computation for active tactile perception.

Internal models to generate

Dendritic activity encodes task rules.

Shan Yu: Understanding the brain through the lens of AI, and vice versa

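Different levels at which the brain inspires AI:

- Learning level: how does the system learn to do things?

- Computational level: what computation is the system performing?

- Algorithmic level

- Physical implementation level: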

von Neumann architecture:

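Stochastic release of neurotransmitters.

Dropout algorithm: compared with a fully connected network, dropout …

σ: supercritical state:

Neuronal avalanches

Critical state and brain-state fluctuations

BatchNorm

Self-organized criticality addresses the vanishing-gradient problem.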

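What is intelligence?

Catastrophic forgetting: task 1 -> task 2 -> poor performance on task 1.

Overcoming catastrophic forgetting, inspired by synaptic plasticity: the orthogonal weights modification (OWM) algorithm.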
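A minimal sketch of the projection at the heart of OWM (Zeng et al., 2019): weight updates for a new task are multiplied by P = I - A(AᵀA + αI)⁻¹Aᵀ, where the columns of A are inputs seen in earlier tasks, so the layer's responses to those inputs are (nearly) unchanged. This batch form, the random stand-in data and gradient, and the small `alpha` are assumptions for illustration; the published algorithm updates P recursively during training.

```python
import numpy as np

def owm_projector(prev_inputs, alpha=1e-5):
    """P = I - A (A^T A + alpha I)^(-1) A^T, with earlier-task inputs as the columns of A.

    P (approximately) removes the components of a vector that lie in span(A).
    """
    a = prev_inputs
    reg = a.T @ a + alpha * np.eye(a.shape[1])
    return np.eye(a.shape[0]) - a @ np.linalg.solve(reg, a.T)

rng = np.random.default_rng(7)
n_in, n_out = 20, 3
W = rng.standard_normal((n_out, n_in))           # layer weights after learning task 1
X1 = rng.standard_normal((n_in, 5))              # task-1 inputs (columns)
grad_task2 = rng.standard_normal((n_out, n_in))  # stand-in for a task-2 gradient

P = owm_projector(X1)
W_plain = W - 0.1 * grad_task2                   # ordinary update
W_owm = W - 0.1 * grad_task2 @ P                 # update restricted to the orthogonal complement

drift_plain = np.abs(W_plain @ X1 - W @ X1).max()
drift_owm = np.abs(W_owm @ X1 - W @ X1).max()
print(drift_plain, drift_owm)
```

The plain update visibly changes the outputs for task-1 inputs, while the projected update leaves them essentially intact but still modifies the weights along the remaining input directions, where task 2 can be learned.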

A prefrontal-cortex-like module for ANNs.

Concept layer; CA1 layer.

Changsong Zhou (周昌松): Hierarchical Modular Organization in the Brain

Segregation, integration, and balance underlying cognitive diversity

eigenmodes of networks: linking structure to function

Nested spectral partition (NSP): applied to the Laplacian matrix of SC (structural connectivity) and FC (functional connectivity).
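The simplest form of "eigenmodes link structure to function" is that low-frequency eigenvectors of a graph Laplacian L = D - A expose modules. The toy sketch below (two 5-node modules joined by one weak link, all values hypothetical) shows the second eigenmode (the Fiedler vector) splitting the network into its two modules by sign. It is not the nested spectral partition method itself, which, as we understand it, nests partitions obtained from successive eigenmodes into a hierarchy.

```python
import numpy as np

def laplacian_eigenmodes(adjacency):
    # Graph Laplacian L = D - A; eigh returns eigenvalues in ascending order.
    degree = np.diag(adjacency.sum(axis=1))
    eigvals, eigvecs = np.linalg.eigh(degree - adjacency)
    return eigvals, eigvecs

# Two densely connected 5-node modules joined by a single weak link (toy example).
n = 10
A = np.zeros((n, n))
A[:5, :5] = 1.0
A[5:, 5:] = 1.0
np.fill_diagonal(A, 0.0)
A[4, 5] = A[5, 4] = 0.1

eigvals, eigvecs = laplacian_eigenmodes(A)
fiedler = eigvecs[:, 1]              # eigenmode of the second-smallest eigenvalue
print(np.round(eigvals[:3], 3))
print(np.sign(fiedler))
```

The first eigenmode (eigenvalue 0) is uniform and describes global integration; the second has a small eigenvalue and opposite signs on the two modules, describing segregation.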

Xiao-Jing Wang (汪小京): From the concept of cognitive-type local circuits to distributed cognition in a large-scale neocortex

Research on the PFC (prefrontal cortex) took off in the 1990s (Arnsten, Cerebral Cortex 2023).

The PFC plays a critical role in intelligence. Language is primarily a tool for communication rather than thought.

The prefrontal cortex as a quintessential cognitive-type neural circuit: working memory and decision making.

Working memory: cue (food on the right) -> delay -> response.

Decision making: value-based (economic choice) / perceptual.

A cognitive-type microcircuit model of working memory and decision making: slow reverberating dynamics (spontaneous symmetry breaking with g_A = g_B).

-> Evidence accumulation and categorical choice. Even after the input is removed, the choice (A or B) is maintained, which is working memory (NMDAR-dependent).

Working memory and decision making emerge with sufficiently strong recurrent connections: firing rate vs. synaptic strength relative to a baseline.

PFC dependent cognitive building blocks.

distributed working memory and decision making.

From local circuits to large brain systems: Felleman and Van Essen 1991.

Brain-wide representations of behavior spanning multiple timescales and states in C. elegans.

everything everywhere all at once: Decision making signals engage entire brain.

FLNs decay exponentially with distance. spatial embedding of structural similarity.

Markov-Kennedy connectivity matrix is distance-dependent and dense (~67%) [nature neuron 2024]

Canonical local cortical circuit. R. J. Douglas and K. A. Martin 2012: "our view is that the rapid evolutionary expansion of neocortex has been made possible by building an isocortex, a structure that uses repeats of the same basic local circuits throughout a single cortical sheet."

a measure of synaptic input per neuron along the hierarchy.

A dendritic disinhibitory circuit mechanism for pathway-specific gating.

A connectome-based model of macaque cortex:

- The local network model is taken as that of V1 from Binzegger.

- Connection weights of inter-areal inputs to each area are given by FLN_ij.

- The strength of excitation in each area is proportional to its spine count h_i.

time constant ~10 ms.

neural integrators with long time constants.
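Given the ~10 ms single-neuron time constant noted above, long integration times can come from recurrent excitation: for τ dr/dt = -r + w·r + I, activity decays with an effective time constant τ/(1 - w). The Euler sketch below checks this for a single rate unit; the recurrent weights, input amplitude, and timing are assumed values.

```python
import numpy as np

def simulate_rate_unit(w_rec, tau=10.0, dt=0.1, on_ms=50.0, off_ms=1000.0, amp=1.0):
    """Euler simulation of tau * dr/dt = -r + w_rec * r + I(t), with times in ms.

    I(t) = amp for the first on_ms, then 0 for off_ms.
    Returns the rate trace and the step index at which the input switches off.
    """
    n_on = int(round(on_ms / dt))
    n_off = int(round(off_ms / dt))
    r = np.zeros(n_on + n_off + 1)
    for i in range(n_on + n_off):
        drive = amp if i < n_on else 0.0
        # Leak (-r) partly cancelled by recurrent excitation (+w_rec * r).
        r[i + 1] = r[i] + dt / tau * (-(1.0 - w_rec) * r[i] + drive)
    return r, n_on

retained_by_w = {}
for w_rec in (0.0, 0.9, 0.99):
    r, n_on = simulate_rate_unit(w_rec)
    # Fraction of the activity still present 1000 ms after the input ends.
    retained_by_w[w_rec] = r[-1] / r[n_on]
    print(f"w_rec={w_rec}: tau_eff={10.0 / (1.0 - w_rec):.0f} ms, retained={retained_by_w[w_rec]:.3g}")
```

With w_rec = 0.99 about e^-1 ≈ 37% of the activity remains after one second, whereas without recurrence it is gone within tens of milliseconds. The price is fine-tuning: w_rec = 1 gives a perfect but marginally stable integrator, and w_rec > 1 makes activity grow without bound.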

a large-scale working memory model with directed and weighted inter-areal connectivity.

Bifurcation in space: a gap in both macaque and mice.